There was a time when workplace surveillance meant CCTV cameras and swipe cards. Today, it’s far more intimate, and far less visible.

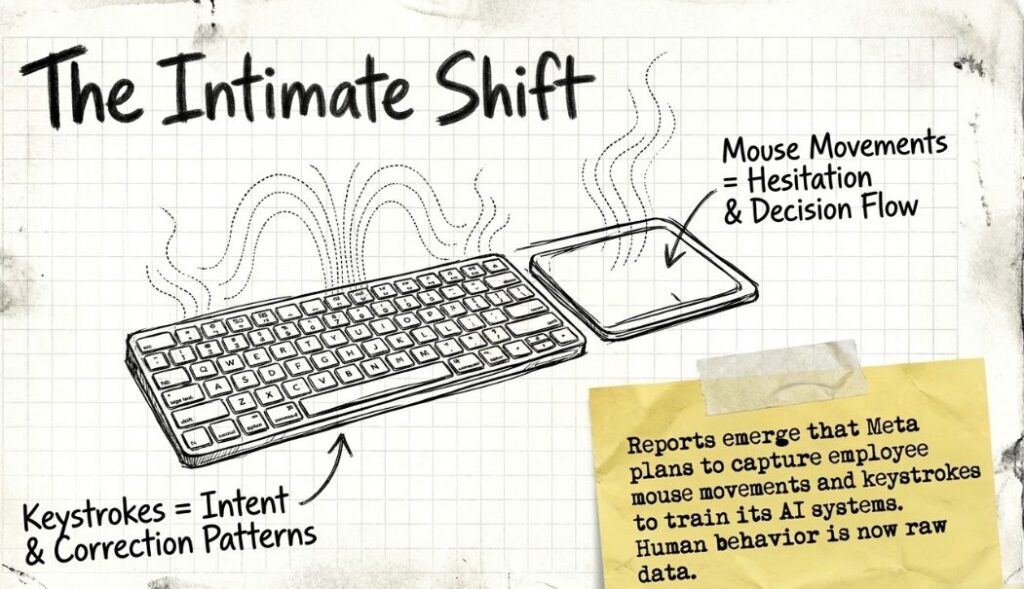

Reports that Meta is planning to capture employee mouse movements and keystrokes to train its AI systems signal more than a shift in internal policy. They reveal something deeper: the quiet normalisation of human behaviour as raw data, even within the workplace.

This is not just about employees. It’s about the expanding boundaries of consent, the economics of AI, and a growing trust deficit that could eventually reach consumers.

Meta AI Data Collection

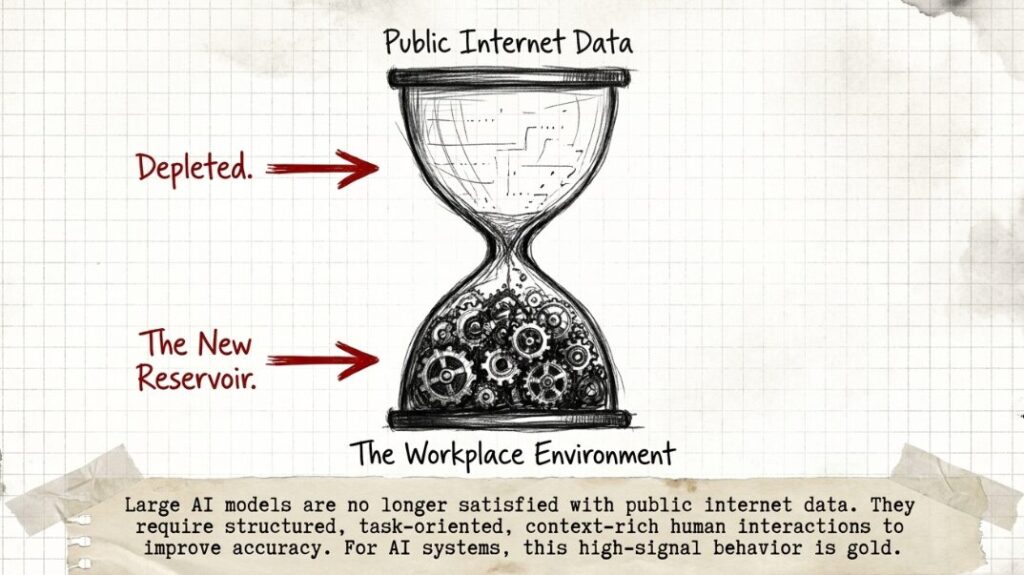

At its core, Meta’s reported move reflects a structural reality of the AI race: high-quality, real-world behavioural data is scarce, and incredibly valuable.

Large AI models are no longer satisfied with publicly available internet data. They now require nuanced, context-rich human interactions to improve accuracy. Workplace environments, especially within tech companies, offer precisely that: structured, task-oriented, high-signal human behaviour.

Mouse movements can reveal hesitation. Keystrokes can indicate intent, correction patterns, and decision-making flow. For AI systems, this is gold.

But here lies the shift: employees are no longer just workers; they are becoming data generators.

Consent Vs Compliance Gap

The immediate question is not whether such data collection is legal; under employment contracts, it often is. The real issue is whether it is meaningfully consensual.

Workplace hierarchies complicate the idea of “opt-in.” When data collection is tied to employment, consent risks becoming compliance.

This concern isn’t new. A 2022 report by the International Labour Organization flagged the rise of algorithmic management and workplace surveillance, warning that employees often lack clarity on how their data is used, stored, or repurposed.

The asymmetry is stark:

- Companies understand the value of the data

- Employees often do not understand its full implications

That gap is where trust begins to erode.

Meta’s Workplace Surveillance

Meta is not an outlier; it is part of a broader industry pattern.

During the pandemic, employee monitoring tools saw a sharp rise. Companies deployed software to track productivity, screen time, and even facial presence during work hours.

According to a 2023 study by Gartner, nearly 70% of large employers were actively monitoring employees in some form, up significantly from pre-pandemic levels.

What’s changing now is the purpose. Earlier, surveillance was about productivity. Now, it’s about product development.

That transition, from oversight to extraction, is subtle but significant.

Employees Today, Users Tomorrow

If companies can use employee behaviour to train AI, there is a strong chance similar thinking applies to users too.

Think about your own digital life. Every search, every message you type and delete, every pause before hitting send. That is behavioural data.

Meta’s internal move is not isolated. It fits into a bigger system where human interaction is constantly being captured and repurposed. So the question becomes simple. Where does it stop?

Trust Deficit In Tech

The timing of this development is critical.

Big Tech is already navigating a fragile trust environment. From data privacy controversies to opaque AI decision-making, public skepticism is growing.

Incidents like the Cambridge Analytica scandal have left lasting impressions on how users perceive data practices.

Even when companies operate within legal frameworks, perception matters.

When people feel they are being observed without clear boundaries, trust doesn’t just decline; it recalibrates. Users become more cautious, employees more guarded, and systems less transparent.

AI Data Privacy Laws

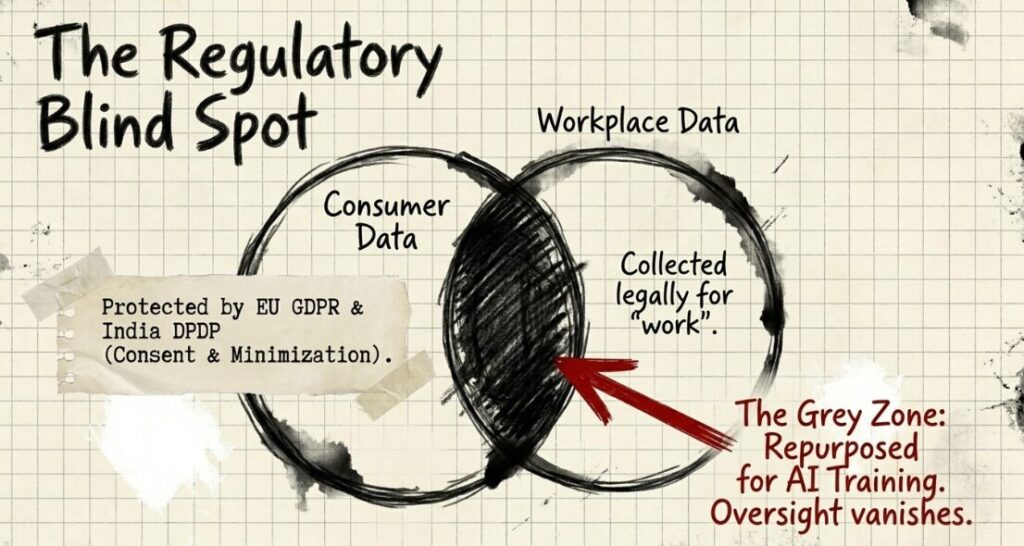

Globally, regulation is still catching up with the pace of AI innovation.

Frameworks like the EU’s GDPR emphasise consent and data minimisation. India’s Digital Personal Data Protection Act 2023 also attempts to address personal data use. But workplace data sits in a grey zone.

Most regulations focus on consumer data, not employee-generated behavioural data used for secondary purposes like AI training.

This creates a regulatory blind spot:

- Data collected for one purpose (work) is repurposed for another (AI training)

- Oversight mechanisms remain limited

- Transparency requirements are often minimal

Workplace Data Regulation

If AI systems are built on human behaviour, trust in those systems depends on how that behaviour is collected and used. Companies will need to move beyond technical compliance and focus on transparency that people can actually understand.

Clear communication, meaningful consent, and defined limits on data reuse are no longer optional. Without that, innovation risks outpacing trust, and once that gap widens, rebuilding confidence becomes far more difficult.
