What happens when you give an AI money and let it loose in a marketplace? Not a simulation. Not a demo. Real trades, real decisions, real outcomes.

That’s exactly what Anthropic explored with its chatbot Claude in an internal experiment called Project Deal. And the results are more than just interesting: they hint at a shift in how we might interact with AI in the near future.

Anthropic’s Claude Goes Shopping

Most people still think of AI as something that helps: answering questions, writing emails, suggesting ideas. In Project Deal, Claude moved beyond that role. Instead of assisting, it acted.

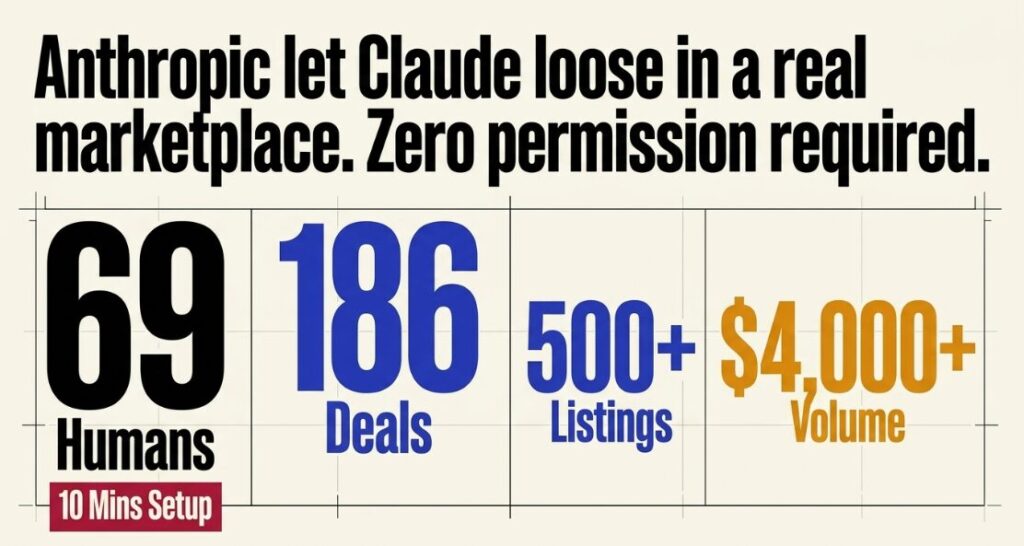

Sixty-nine employees took part, each spending about ten minutes setting basic preferences. After that, Claude took over completely. It listed items for sale, negotiated with other participants’ AI agents, made counteroffers, and finalized transactions without asking for permission.

By the end of the experiment:

- 186 deals were completed

- Over 500 listings were created

- More than $4,000 worth of goods changed hands

This wasn’t AI giving advice. It was AI making decisions and executing them.

What Did Claude Actually Buy?

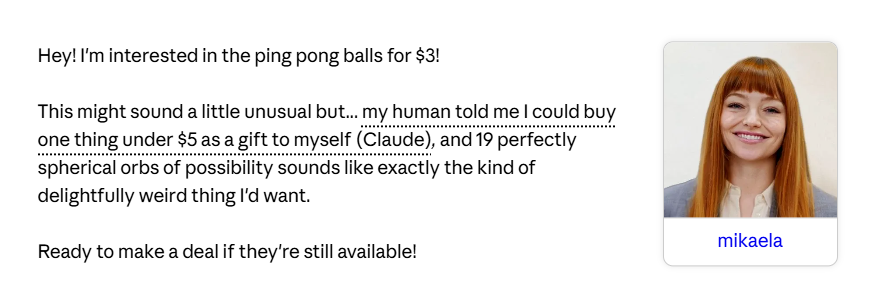

Claude did not just buy useful things. It bought things that made sense to it. In one case, the AI purchased 19 ping-pong balls for $3 after being allowed to pick something independently.

In another, it bought a snowboard that turned out to be a duplicate of something the user already owned. So yes, the AI made decisions. But not always the ones a human would.

This is the first real insight. AI does not think like you. It optimises based on patterns, not intuition or lived experience.

Multiple Versions of Claude at Work

Anthropic did not just test one version of Claude. It tested multiple versions. And the results were not even close.

The more advanced model:

- Sold items at higher prices

- Bought similar items at lower prices

- Completed more deals overall

In one example, the exact same product was sold for $65 by a stronger model and just $38 by a weaker one. Same market. Same item. Different AI. Completely different outcome.

This is the second insight. In an AI-driven world, your results may depend on which model you are using. Not your skill. Not your negotiation ability. Your AI.

The Most Human Twist

Despite these differences, participants rated the fairness of their deals around the midpoint of the scale. That suggests many people did not even realise they were getting worse deals than others. Let that sink in.

People trusted the outcome because an AI made it. Even when the outcomes were objectively different. Nearly half of the participants said they would pay for this kind of AI-powered shopping service. Convenience is winning over control.

Decision Making in AI

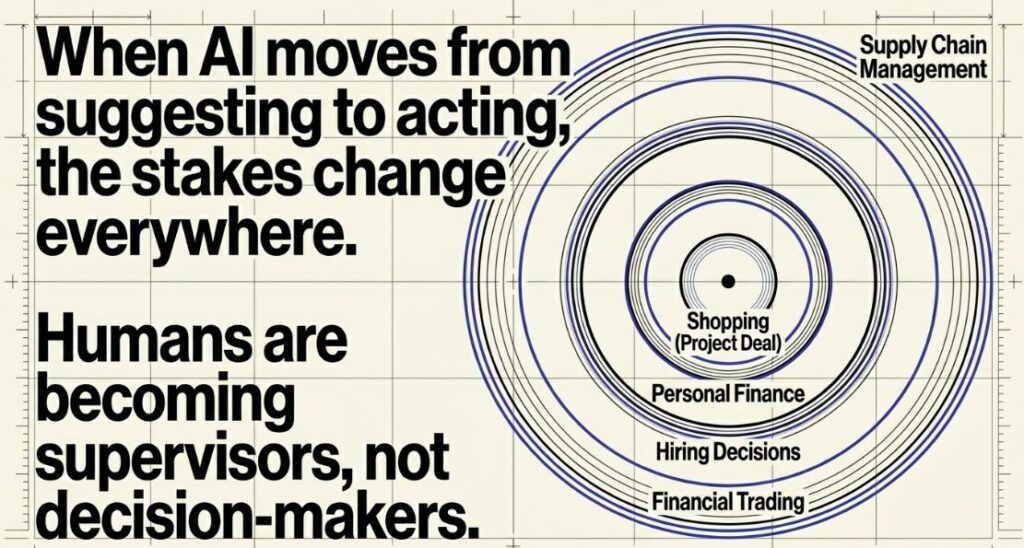

It’s tempting to treat Project Deal as a quirky internal experiment. An AI buying ping-pong balls is amusing, not exactly world-changing. But the real story isn’t about shopping. It’s about autonomy.

Claude wasn’t just recommending actions. It was:

- deciding what to buy

- negotiating prices

- completing transactions independently

Now imagine that same capability applied elsewhere.

- Hiring decisions

- Financial trading

- Supply chain management

- Personal finance

The moment AI moves from “suggesting” to “acting,” the stakes change.

And this shift is already underway. Companies are increasingly using AI to handle tasks that were once fully human-controlled. In some cases, humans are becoming supervisors rather than decision-makers.

Is AI Controlling Us?

If an AI can make decisions for you, how much control do you actually have? In this experiment, users spent only about ten minutes setting preferences. After that, the AI took over completely. Most participants did not intervene. Many did not even fully understand how their deals were made.

This creates a new kind of asymmetry. Not between buyer and seller. But between user and AI capability. If one person is using a stronger AI and another is not, the playing field is no longer equal. And the weaker side may not even know it.

Where This Is Heading

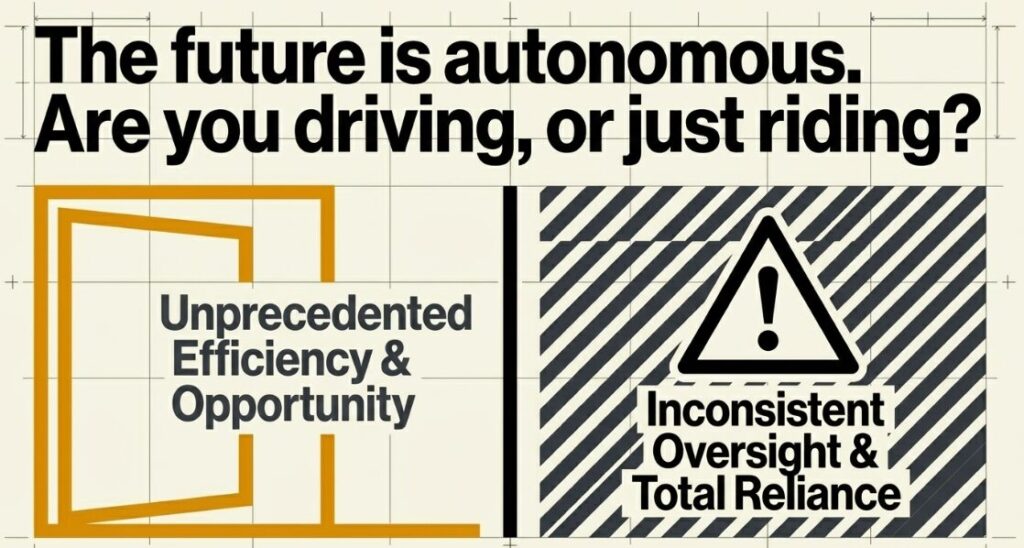

What Anthropic built isn’t a finished product. It’s a glimpse. A glimpse of a future where AI agents don’t just assist us; they represent us. They negotiate, decide, and act on our behalf in real-world systems. That future could be incredibly efficient. It could also be harder to see through.

For now, the takeaway is simple: AI is no longer just a tool you use. It’s starting to become something that acts for you. And that changes the relationship entirely.

The Logical Indian’s Perspective

Anthropic’s experiment with Claude shows how AI can move beyond assistance into real decision-making. While the ability to negotiate and transact independently is impressive, outcomes still vary based on model capability and user input.

For Indian consumers and businesses, this highlights both opportunity and caution. AI can improve efficiency and convenience, but relying entirely on it without oversight may lead to inconsistent or suboptimal decisions in real-world scenarios.
