This isn’t about screen time. It’s about how much control users really have.

For years, the debate around social media has been framed as a matter of discipline. Parents were told to monitor usage. Teenagers were told to log off. Responsibility, we were told, rested with the individual.

But recent jury verdicts in the United States against Meta and Google challenge that assumption at its core. They force a far more uncomfortable question into public view. If addiction is engineered, can consent ever be truly free?

Juries in the US have held Meta and Google liable for the addictive design of their platforms and the resulting harm to children’s mental health.

This is not just about money. It is about a fundamental shift in how we view the responsibility of companies that shape the minds of our youth.

What’s the Case About?

The rulings mark a turning point not just in law, but in how we understand the digital world.

The California case was led by a young woman named Kaley, who entered the digital world of YouTube at age six and Instagram at nine. Her legal team argued that these platforms were not just websites but intentionally designed addiction machines.

Features like infinite scrolling, beauty filters, and autoplaying videos were engineered to keep children hooked for hours on end.

Simultaneously, the state of New Mexico took Meta to court, alleging the company knowingly misled the public about safety while failing to stop predators from exploiting children. They argued that profits were consistently prioritised over child safety.

The jury agreed. It found that design itself, not just content, could cause harm. In doing so, it pierced a long-standing legal shield that allowed tech companies to distance themselves from the consequences of their platforms.

The Illusion of Choice

At the heart of this shift lies a deeper tension between choice and influence.

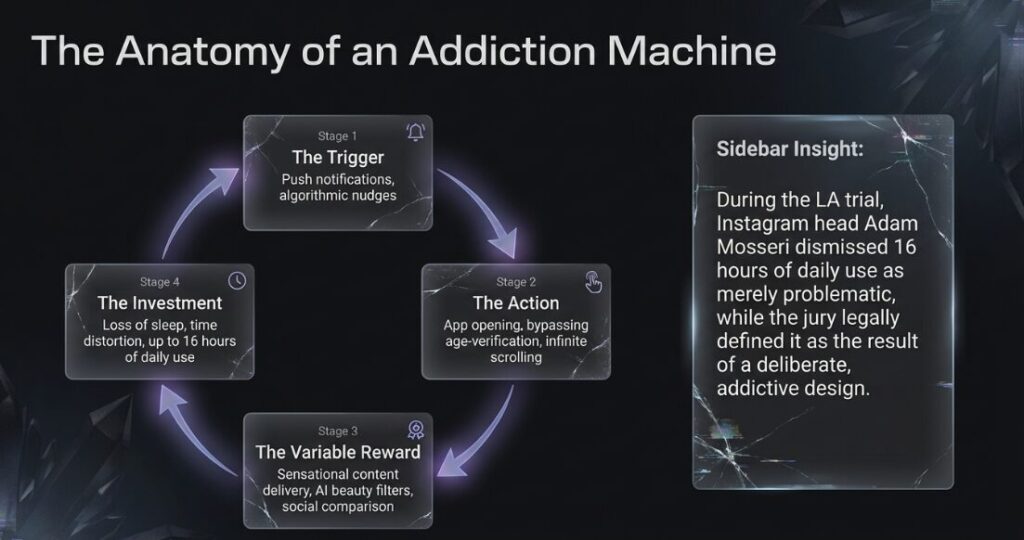

Social media platforms are often presented as tools that users can pick up or put down at will. But internal mechanics tell a different story. Features are tested, refined, and optimised to keep users engaged for as long as possible. Notifications arrive at carefully timed intervals. Content is personalised to trigger emotional responses. The result is an environment where disengagement becomes increasingly difficult.

For adults, this may translate into distraction or fatigue. For children and teenagers, whose cognitive and emotional frameworks are still developing, the impact can be far more serious.

Experts in the trials described cycles of reinforcement that mirror addictive behaviour. The more time a user spends, the more the system learns, and the more compelling the content becomes. Over time, what feels like free choice begins to look more like guided behaviour.

What Did Meta & Google Say?

The response from the tech giants was swift and firm. Both Meta and Google expressed deep disagreement with the findings and confirmed their plans to appeal the decisions. Meta argued that teen mental health is a ‘profoundly complex’ issue that cannot be blamed on a single application.

Meanwhile, a spokesperson for Google claimed that the case misunderstood YouTube, which the company describes as a responsibly built streaming platform rather than a social media site. However, advocates and world leaders, including UK Prime Minister Sir Keir Starmer, have welcomed the decisions as a necessary step toward change.

How Social Media Hooks the Brain

The trials brought into focus how these systems operate at a psychological level.

Experts described cycles of reinforcement that keep users coming back. Each scroll, each like, each new video offers a small reward. Over time, the brain begins to expect and seek out that reward.

For adults, this may lead to distraction or fatigue. For children and teenagers, whose minds are still developing, the effects can be deeper.

For Kaley, this addiction led to clinical depression and body dysmorphia, a condition that made it impossible for her to see herself clearly. The platforms’ focus on profit and time spent often comes at a high psychological cost for young users.

This is not just usage. It is conditioning.

A Pattern of Harm

This legal fight is fuelled by tragic stories of loss. The court heard references to cases like that of 14-year-old Molly Russell, who died in 2017 after allegedly being exposed to harmful content online.

Another grieving mother, Ellen Roome, is currently suing TikTok after the death of her son, echoing the sentiment that enough is enough.

Recent research even found that dummy accounts for 15-year-olds were bombarded with self-harm content despite new safety laws. These trials serve as a voice for families who believe their children were pushed toward danger by relentless, automated algorithms.

What Real Accountability Looks Like

If this moment is to lead to meaningful change, it cannot stop at courtroom victories. It must translate into structural shifts in how digital platforms are built and governed.

First, there is a need for greater transparency. Users and regulators must be able to understand how algorithms prioritise and recommend content. Without this visibility, meaningful oversight remains difficult.

Second, design standards must evolve, especially for younger users. Features that encourage endless engagement may need to be rethought or limited in contexts involving children and teenagers.

Third, independent audits can play a critical role. External assessments of platform behaviour can help identify risks before they escalate into harm.

Finally, digital literacy must become a shared priority. Parents, educators, and users need the tools to navigate online spaces with greater awareness and confidence.

Beyond Outrage

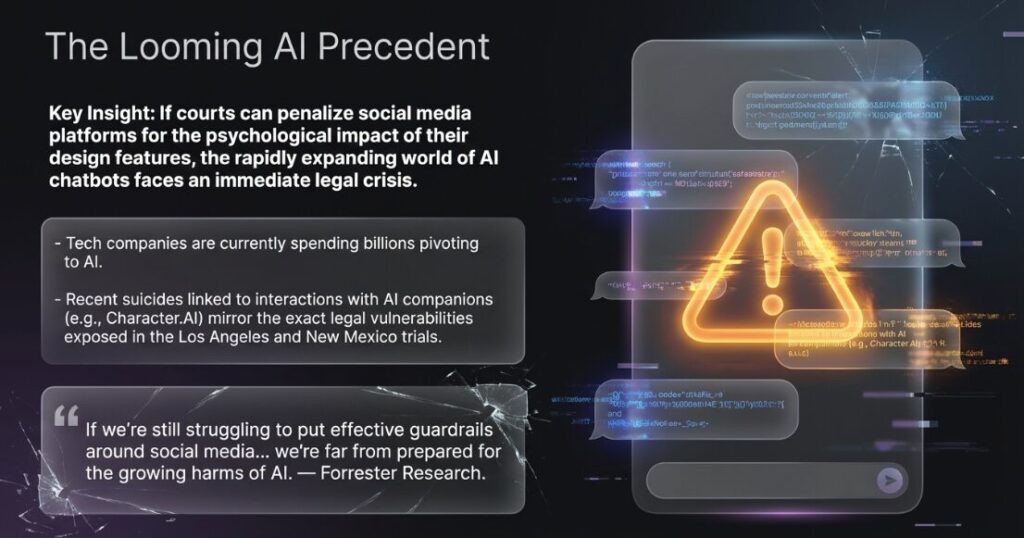

Experts believe these verdicts mark a breaking point between the public and social media companies. With more than 2,400 similar cases already waiting in the wings, the tech industry is facing an uncertain future in which it may be forced to completely redesign its features.

The era of these companies using legal loopholes to avoid responsibility for their design choices seems to be fading. As we look ahead, the hope is that these landmark decisions will finally force big tech to prioritise the safety and well-being of children over their massive, multi-billion-dollar bottom line.

The Logical Indian’s Perspective

We believe that no profit margin is worth the mental health of a child. These verdicts are a vital wake-up call for a world that has allowed technology to outpace our ethics.

We must foster a culture of empathy and real connection, ensuring that the digital spaces our children inhabit are built for safety, not addiction.
